Evalion is a voice-agent evaluation platform that simulates real user interactions and normalizes results across scenarios, enabling teams to detect regressions, compare runs over time, and validate an agent’s readiness for production. The platform lets teams test voice agents by creating autonomous testing agents that conduct realistic conversations: interrupting mid-sentence, changing their mind, and expressing frustration just like real customers.

This cookbook demonstrates how to evaluate voice agents by combining Evalion’s simulation capabilities with Braintrust. Voice agents require assessment beyond simple text accuracy: they must handle real-time latency constraints (responses in under 500 ms), manage interruptions gracefully, maintain context across multi-turn conversations, and deliver natural-sounding interactions.

By the end of this guide, you’ll learn how to:

- Create test scenarios in Braintrust datasets

- Orchestrate automated voice simulations with Evalion’s API

- Extract and normalize voice-specific metrics (latency, CSAT, goal completion)

- Track evaluation results across iterations

Prerequisites

- A Braintrust account and API key

- Evalion backend access with API credentials

- Python 3.8+

Getting started

Export your API keys to your environment. Best practice is to keep each API key in an environment variable. However, to make it easier to follow along with this cookbook, you can also hardcode the keys into the code below.
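
As a minimal sketch, the keys could be read from the environment at startup so that a missing key fails fast. `BRAINTRUST_API_KEY` is the variable the Braintrust SDK reads; `EVALION_API_TOKEN` and the `load_api_keys` helper are hypothetical names used here for illustration, so substitute whatever your Evalion credentials are called.

```python
import os

# BRAINTRUST_API_KEY is read by the Braintrust SDK.
# EVALION_API_TOKEN is a hypothetical name; use the one your Evalion setup expects.
REQUIRED_KEYS = ("BRAINTRUST_API_KEY", "EVALION_API_TOKEN")


def load_api_keys(env=None):
    """Return the required keys from the environment, or raise if any are missing."""
    env = os.environ if env is None else env
    missing = [name for name in REQUIRED_KEYS if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {name: env[name] for name in REQUIRED_KEYS}
```

Calling `load_api_keys()` with no arguments reads from `os.environ`; passing a plain dict instead makes the check easy to exercise in tests.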

Creating test scenarios
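
As a rough sketch of the shape the scenarios in this section could take, each record below pairs an `input` with an `expected` outcome. The scenario wording, the `difficulty` metadata field, and the `add_scenarios` helper are illustrative assumptions, not the cookbook’s actual data. The helper only relies on the dataset object exposing an `insert(input=..., expected=..., metadata=...)` method, which is how a dataset returned by Braintrust’s `init_dataset` accepts records.

```python
# Illustrative airline scenarios; the wording is an assumption, not the cookbook's data.
SCENARIOS = [
    {
        "input": "Customer wants to book a one-way flight for next Friday.",
        "expected": "Agent collects travel details and confirms the booking.",
        "metadata": {"difficulty": "easy"},
    },
    {
        "input": "Customer is upset about a cancelled flight and needs to rebook urgently.",
        "expected": "Agent acknowledges the frustration and offers rebooking options.",
        "metadata": {"difficulty": "hard"},
    },
]


def add_scenarios(dataset, scenarios):
    """Insert each scenario into a dataset-like object and return the number added.

    `dataset` can be any object with an insert(input=, expected=, metadata=) method,
    for example the object returned by braintrust.init_dataset(...).
    """
    count = 0
    for scenario in scenarios:
        dataset.insert(
            input=scenario["input"],
            expected=scenario["expected"],
            metadata=scenario.get("metadata", {}),
        )
        count += 1
    return count
```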

We’ll create test scenarios for an airline customer service agent. Each scenario includes the customer’s situation (input) and success criteria (expected outcome). These range from straightforward bookings to high-stress cancellation handling. We’ll add all the scenarios to a dataset in Braintrust.

Creating scorers
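
Mapping raw metrics onto a 0-1 range could look like the following minimal sketch. It assumes CSAT is reported on a 1-5 scale and that latency is scored against a 500 ms target with a linear falloff to zero at 2000 ms. Both the scales and the function names are assumptions for illustration, not Evalion’s documented output format.

```python
def normalize_csat(csat, low=1.0, high=5.0):
    """Map a CSAT rating on an assumed [low, high] scale onto 0-1, clamping outliers."""
    score = (csat - low) / (high - low)
    return max(0.0, min(1.0, score))


def latency_score(latency_ms, target_ms=500.0, worst_ms=2000.0):
    """Score latency: 1.0 at or under the target, falling linearly to 0.0 at worst_ms."""
    if latency_ms <= target_ms:
        return 1.0
    if latency_ms >= worst_ms:
        return 0.0
    return (worst_ms - latency_ms) / (worst_ms - target_ms)
```

Under these assumed bounds, a CSAT of 3 maps to 0.5 and a 1250 ms response also scores 0.5, so both metrics can be compared on the same axis.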

Evalion provides objective metrics (latency, duration) and subjective assessments (CSAT, clarity). We’ll normalize all scores to a 0-1 range for consistent tracking in Braintrust.

Evalion API integration

The EvalionAPIService class handles all interactions with Evalion’s API for creating agents, test setups, and running simulations. The task function orchestrates the workflow: creating agents in Evalion, running simulations, and extracting results. This enables reproducible evaluation across iterations.

The function performs the following steps:

- Creates a hosted agent in Evalion with your prompt

- Sets up test scenarios and personas

- Runs the voice simulation

- Polls for completion and retrieves results

- Cleans up temporary resources
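
The steps above can be sketched as a single function. The `client` object and its methods (`create_agent`, `create_test_setup`, `start_simulation`, `get_simulation`, `delete_test_setup`, `delete_agent`), the `status` values, and the timing defaults are all hypothetical stand-ins for whatever the EvalionAPIService class exposes; they are not Evalion’s actual API.

```python
import time


def run_voice_simulation(client, prompt, scenario, poll_interval=5.0, timeout=600.0,
                         sleep=time.sleep, clock=time.monotonic):
    """Create an agent, run one simulation, poll until it finishes, then clean up.

    `client` is a hypothetical wrapper; every method called on it is an assumption.
    """
    agent_id = client.create_agent(prompt=prompt)
    setup_id = None
    try:
        setup_id = client.create_test_setup(agent_id=agent_id, scenario=scenario)
        simulation_id = client.start_simulation(setup_id=setup_id)

        deadline = clock() + timeout
        while True:
            result = client.get_simulation(simulation_id)
            if result["status"] in ("completed", "failed"):
                return result
            if clock() >= deadline:
                raise TimeoutError(
                    f"Simulation {simulation_id} did not finish in {timeout}s"
                )
            sleep(poll_interval)
    finally:
        # Always remove temporary resources, even when the run fails or times out.
        if setup_id is not None:
            client.delete_test_setup(setup_id)
        client.delete_agent(agent_id)
```

Putting the cleanup in a `finally` block means temporary agents and test setups are removed even if polling raises, and passing `sleep` and `clock` as parameters lets the loop be exercised without real waiting.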

Analyzing results

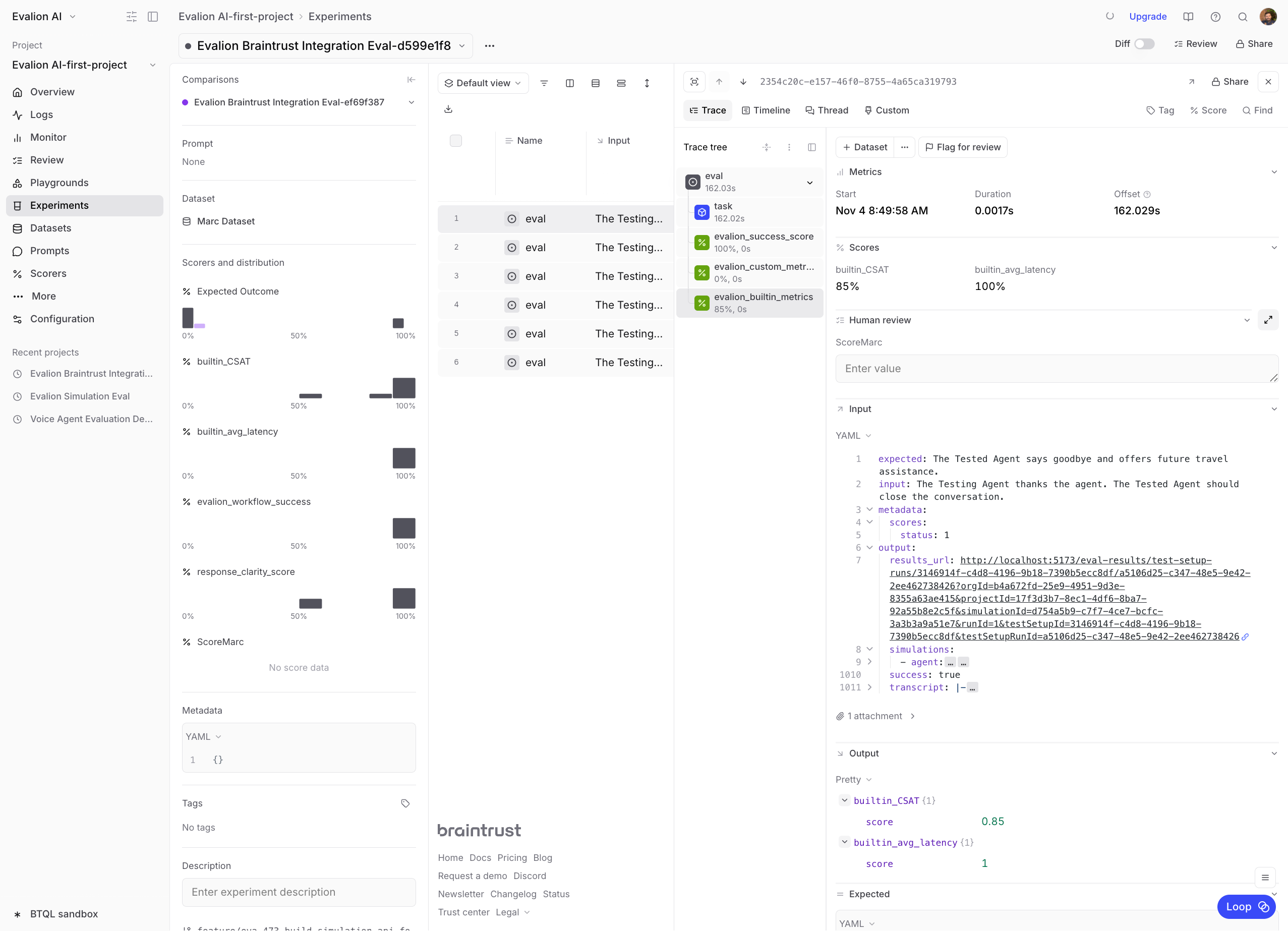

After running evaluations, navigate to Experiments in Braintrust to analyze your results. You’ll see metrics like average latency, CSAT scores, and goal completion rates across all test scenarios.

Next steps

Now that you have a working evaluation pipeline, you can:

- Expand test coverage: Add more scenarios covering edge cases

- Iterate on prompts: Adjust your agent’s prompt and compare results

- Monitor production: Set up online evaluation for live traffic

- Track trends: Use Braintrust’s experiment comparison to identify improvements

Related cookbooks:

- Evaluating a voice agent with OpenAI Realtime API

- Building reliable AI agents with tool calling